Dr GPT (the data edition)

Back in December, I wrote a blog post titled Dr GPT, outlining my experience fighting a seasonal cold/flu and how clear it was to me that ChatGPT (or pick your favourite other LLM) was going to become the first port of call for the vast majority of consumer health enquiries.

Fast forward less than a month, and OpenAI has released AI as a Healthcare Ally, outlining some pretty incredible statistics on how far along that path America already is.

Amongst other intriguing facts and figures:

- over 5% of all ChatGPT messages globally are about healthcare

- 1 in 4 weekly active users (WAUs) ask about healthcare each week

- over 40m WAUs globally prompt about healthcare every day

- 60% of adults in the US say they’ve used AI tools for their health or healthcare in the past three months

This is incredible adoption given the first ever version of this product was released not even 4 years ago. And I can't help feeling pretty inspired and optimistic about the massive potential this technology has to broaden access to potentially life-saving - or at the least, life-improving - advice (the paper also highlights the adoption amongst underserved parts of America).

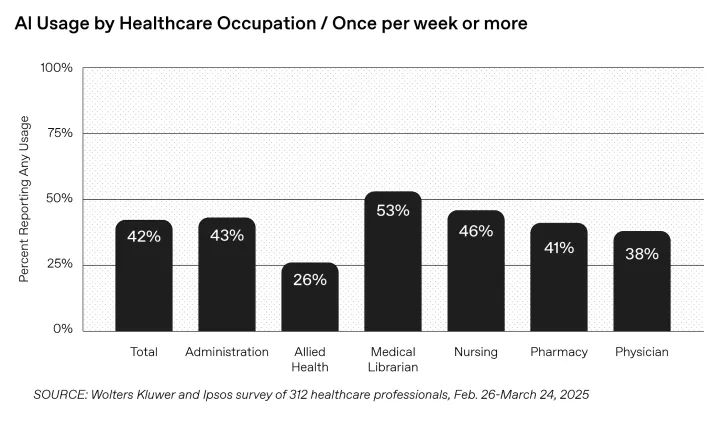

The other striking fact was how prevalent usage is amongst healthcare professionals:

Image from OpenAI: AI as a Healthcare Ally, January 2026

Putting these together, it's clear that AI is already having a phenomenal impact across the entire healthcare system. And it's likely to only go in one direction as the converging powers of (i) adoption; (ii) memory; (iii) model advancements; (iv) infrastructure build-out; and (v) social acceptance all drive AI deeper and deeper into our everyday lives.

It does, though, raise some questions that I would love to see data on:

- How accurate is the advice that ChatGPT is giving? What is the rate of false positives ("you have X", when you don't) and false negatives ("nothing to worry about", when there is)?

- How can we know as patients that our data is being used appropriately by healthcare professionals? The models are 10, 100, 1000x better with appropriate context, so I find it hard to believe patient information isn't being uploaded to these models to help practitioners with their queries

- How does this impact the market for healthcare? Sure, it drops costs on the diagnostic phase, but what if 100x more diagnoses happen as a result - does that push up the cost of the next stages of the journey disproportionately? Removing supply-side constraints in one part of a chain simply shifts them along to the next bottleneck

- How does this compare in other markets outside the US? It's notable many of the queries were related to complex insurance policies and claims. Are people truly using Dr GPT, or are they using it to parse reams of small-print to figure out if (or, more likely, how) they are getting screwed by insurers?

- What does all this mean for start-ups? If we take as true that models improve with (i) user feedback/reinforcement; (ii) accumulated user context (e.g. medical history); and (iii) cross-user data, how the hell does one compete with ChatGPT, Gemini, et al. in the long run?
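On the accuracy question, here is a minimal sketch of how those two rates are conventionally defined, in case it helps pin down what data I'd want to see. The function name `error_rates` and all of the counts are hypothetical, invented purely for illustration; none of them come from the OpenAI paper.

```python
def error_rates(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Compute error rates from confusion-matrix counts.

    tp: told "you have X" and really did
    fp: told "you have X" but did not
    tn: told "nothing to worry about" and that was right
    fn: told "nothing to worry about" but there was something
    """
    return {
        # Share of healthy people who were wrongly flagged
        "false_positive_rate": fp / (fp + tn),
        # Share of genuinely ill people who were wrongly reassured
        "false_negative_rate": fn / (fn + tp),
    }


# Hypothetical counts for 1,000 imaginary conversations (illustration only)
rates = error_rates(tp=80, fp=30, tn=870, fn=20)
print(rates)
```

The two rates have different denominators, which is why a tool can look reassuring on one measure and worrying on the other. In this made-up example only a small share of healthy people are wrongly flagged, while a much larger share of genuinely ill people are wrongly reassured.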

I have more questions than answers, but it's a fascinating structural shift that we have to pay close attention to today, regardless of the victors and casualties (pun intended)...